Simplicity of code takes much greater importance than performance. Don't use it if you care at all about performance. A new backend instance is initialized every time you call ``show()`` and the old one is left to the garbage collector.

    """

    from __future__ import print_function, division

    from inspect import getargspec
    from collections import Callable
    import warnings

    from sympy import sympify, Expr, Tuple, Dummy, Symbol
    from sympy.external import import_module
    from import range
    from import doctest_depends_on
    from import is_sequence
    from .experimental_lambdify import (vectorized_lambdify, lambdify)

    # N.B.
    # When changing the minimum module version for matplotlib, please change
    # the same in the `SymPyDocTestFinder`` in `sympy/utilities/runtests.py`

    # Backend specific imports - textplot
    from import textplot

    # Global variable
    # Set to False when running tests / doctests so that the plots don't show.
    _show = True


    def unset_show():
        global _show
        _show = False

    # The public interface

    class Plot(object):
        """The central class of the plotting module.

For interactive work the function ``plot`` is better suited.

This class permits the plotting of sympy expressions using numerous backends (matplotlib, textplot, the old pyglet module for sympy, Google charts api, etc).

The figure can contain an arbitrary number of plots of sympy expressions, lists of coordinates of points, etc. Plot has a private attribute _series that contains all data series to be plotted (expressions for lines or surfaces, lists of points, etc (all subclasses of BaseSeries)). Those data series are instances of classes not imported by ``from sympy import *``.

The customization of the figure is on two levels. Global options that concern the figure as a whole (eg title, xlabel, scale, etc) and per-data series options (eg name) and aesthetics.

The difference between options and aesthetics is that an aesthetic can be a function of the coordinates (or parameters in a parametric plot).
Especially if you need publication-ready graphs and this module is not enough for you - just get the ``_backend`` attribute and add whatever you want directly to it. In the case of matplotlib (the common way to graph data in Python) just copy ``_backend.fig`` which is the figure and ``_backend.ax`` which is the axis and work on them as you would on any other matplotlib object.

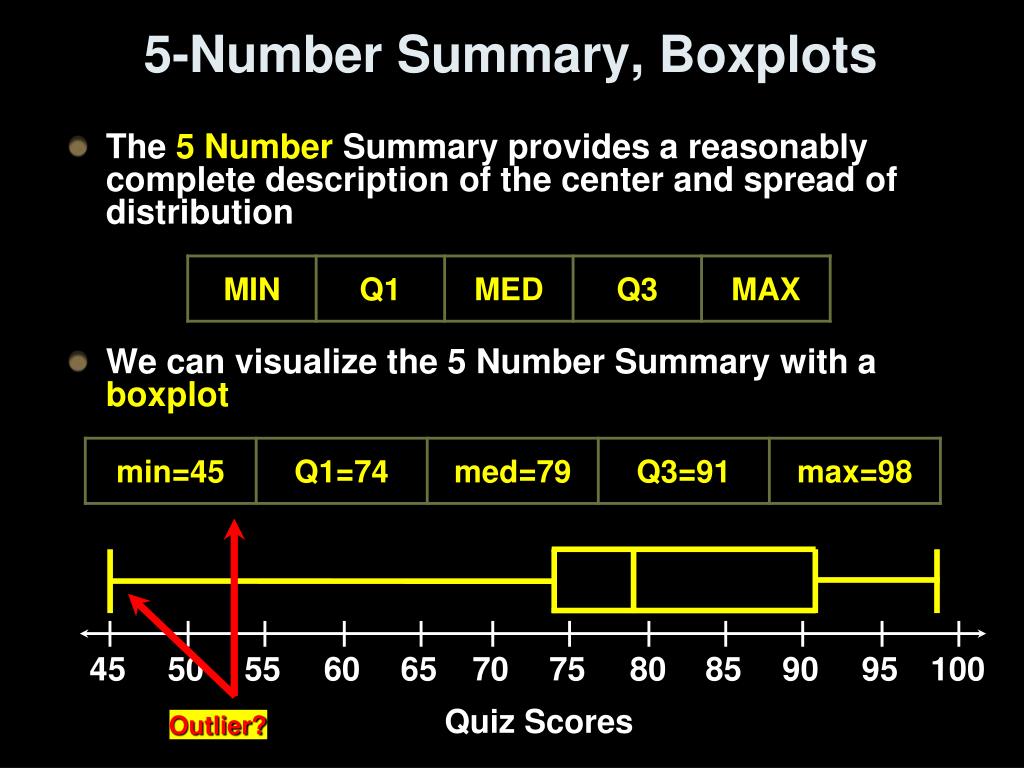

Now, C is the hyperparameter: a high value of C tells the model to give high weight to the training data and a lower weight to the complexity penalty. A low value tells the model to give more weight to this complexity penalty at the expense of fitting the training data. In other terms, a high C means "Trust this training data a lot", while a low value says "This data may not be fully representative of the real-world data, so if it's telling you to make a parameter really large, don't listen to it".

We proceed to report the following results for the intercept and weights of the model, along with the accuracy of the model and the confusion matrix. The above values show the intercept and the slope or weight parameters Theta1 and Theta2. We try to minimize the false positives and false negatives, which lie on the opposite diagonal of the confusion matrix; at C=1, the model score is roughly 80%, with FP=16 and FN=19.

The line has the equation m1*x1 + m2*x2 + c = 0, which gives us x2 = -(c + m1*x1)/m2. The weights from the coefficient matrix are used as m1 and m2, which denote the weights w1 and w2 respectively. 00532466 and m2=3.12375707, we calculate x2 = -(c + m1*x1)/m2 for every point x from the xlist, which is a list of x values from -1 to +1 in steps of 0.1. It starts from -1.1 to factor in -1 as well.

"""Plotting module for Sympy.

A plot is represented by the ``Plot`` class that contains a reference to the backend and a list of the data series to be plotted. The data series are instances of classes meant to simplify getting points and meshes from sympy expressions. ``plot_backends`` is a dictionary with all the backends.

For all the fancy stuff use directly the backend. You can get the backend wrapper for every plot from the ``_backend`` attribute. Moreover the data series classes have various useful methods like ``get_points``, ``get_segments``, ``get_meshes``, etc, that may be useful if you wish to use another plotting library.
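The boundary-line calculation described above (solving m1*x1 + m2*x2 + c = 0 for x2 over x values from -1 to +1 in steps of 0.1) can be sketched roughly as follows. This is only an illustration: the helper name `decision_boundary_x2` is made up here, and the values of `c` and `m1` are placeholders, since the post's actual intercept and first weight are not fully available. Only `m2` is taken from the text.

```python
def decision_boundary_x2(x1, c, m1, m2):
    """Solve m1*x1 + m2*x2 + c = 0 for x2."""
    if m2 == 0:
        raise ValueError("m2 must be non-zero to solve for x2")
    return -(c + m1 * x1) / m2


# x values from -1 to +1 in steps of 0.1; an integer counter plus round()
# avoids the drift that repeated float addition would introduce.
xlist = [round(-1 + 0.1 * i, 1) for i in range(21)]

c = 0.5            # placeholder intercept (assumption, not from the post)
m1 = 0.005         # placeholder weight w1 (assumption, not from the post)
m2 = 3.12375707    # weight w2 as quoted in the post

# One (x1, x2) pair per x value; these are the points of the boundary line.
boundary = [(x1, decision_boundary_x2(x1, c, m1, m2)) for x1 in xlist]

for x1, x2 in boundary[:3]:
    print(x1, x2)
```

The resulting pairs can be handed to any plotting library to draw the line over the scatter of training points.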
Next, our penalty parameter decides whether there is regularization and which approach suits us. Regularization normally tries to reduce or penalize the complexity of the model.
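To make the trade-off between the training data and the complexity penalty concrete, here is a minimal sketch. It assumes the common L2 formulation in which the objective is 0.5*||w||^2 + C * (log loss), so C multiplies the data-fit term; the post does not state which formulation it uses. The toy points, labels, weights, and function names are all hypothetical and chosen only for illustration.

```python
import math


def log_loss(weights, intercept, points, labels):
    """Summed logistic loss of a two-feature linear model."""
    total = 0.0
    for (x1, x2), y in zip(points, labels):
        z = intercept + weights[0] * x1 + weights[1] * x2
        p = 1.0 / (1.0 + math.exp(-z))          # predicted P(y = 1)
        total += -(y * math.log(p) + (1 - y) * math.log(1 - p))
    return total


def l2_penalty(weights):
    """Complexity penalty: half the squared norm of the weights."""
    return 0.5 * sum(w * w for w in weights)


def penalty_share(weights, intercept, points, labels, C):
    """Fraction of the objective contributed by the complexity penalty."""
    penalty = l2_penalty(weights)
    objective = penalty + C * log_loss(weights, intercept, points, labels)
    return penalty / objective


# Hypothetical toy data and parameters (not from the post).
points = [(-1.0, -0.5), (-0.5, -1.0), (0.5, 1.0), (1.0, 0.5)]
labels = [0, 0, 1, 1]
weights = (0.5, 3.0)
intercept = 0.1

for C in (0.01, 1.0, 100.0):
    share = penalty_share(weights, intercept, points, labels, C)
    print("C =", C, "penalty share of objective =", round(share, 4))
```

For fixed weights, the penalty's share of the objective shrinks as C grows, which matches the description of a high C as trusting the training data more.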
